AI is fundamentally redefining leadership by providing new tools, frameworks, and systems that allow leaders not just to manage complexity, but to see, challenge, and reshape their organizations in ways never before possible. The competitive mandate for leaders is clear: harness AI not merely for efficiency, but as an engine for deeper self-awareness, structured dissent, and proactive sensing that unlocks true organizational agility and resilience.

Strategic Frameworks for Next-Gen AI Leadership

Forward-thinking leaders are moving beyond pilot projects and isolated automation to experiment with new, holistic approaches—many inspired by concepts like the Leadership Mirror, Red-Team Loop, and Organization Pulse Monitor. These paradigms operationalize AI in ways that directly address the perennial blind spots, biases, and inertia that often undermine executive decision-making.

George Yang: helping organizations and executives embrace AI.

The Leadership Mirror: Cultivating Radical Self-Awareness

The Leadership Mirror uses AI to continuously analyze leadership communication, decision rationale, and team interactions, surfacing insights that are often overlooked or difficult for humans to acknowledge. For example, Microsoft has begun leveraging AI tools to track who dominates meetings, which voices get systematically dismissed, and when evidence is overridden by intuition—creating dashboards that encourage leaders to confront uncomfortable patterns.

- This approach helps leaders challenge their own narrative, improve inclusiveness, and drive more thoughtful debate.

- Because AI can process language in real time, leaders can receive ongoing feedback and “reflections” that support a culture of deliberate, transparent leadership.

- The Leadership Mirror is also a vehicle for mitigating the “competence penalty,” where women and older workers face skepticism for using AI—even when it enhances productivity. By surfacing evidence of expertise and impact, it reduces bias and builds psychological safety.
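One signal a Leadership Mirror might surface, who dominates meetings, can be approximated from speaking time alone. The sketch below is a minimal illustration, not a description of any vendor's tool: the `(speaker, seconds)` transcript format, the function names, and the 50% threshold are all assumptions made for the example.

```python
from collections import defaultdict


def speaking_shares(turns):
    """Return each speaker's share of total speaking time.

    `turns` is a list of (speaker, seconds) tuples, a hypothetical
    transcript format assumed for this sketch.
    """
    totals = defaultdict(float)
    for speaker, seconds in turns:
        totals[speaker] += seconds
    overall = sum(totals.values())
    if overall == 0:
        return {}
    return {speaker: t / overall for speaker, t in totals.items()}


def dominant_speakers(turns, threshold=0.5):
    """List speakers whose share exceeds `threshold` (an assumed cutoff)."""
    shares = speaking_shares(turns)
    return sorted(s for s, share in shares.items() if share > threshold)


# Illustrative data only: names and durations are invented.
meeting = [("Ana", 300), ("Ben", 60), ("Ana", 240), ("Chi", 60), ("Ben", 60)]
shares = speaking_shares(meeting)
flagged = dominant_speakers(meeting)
print(shares, flagged)
```

A real system would need consent, speaker attribution, and context (a presenter is expected to talk more), so a share like this is a prompt for reflection rather than a verdict.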

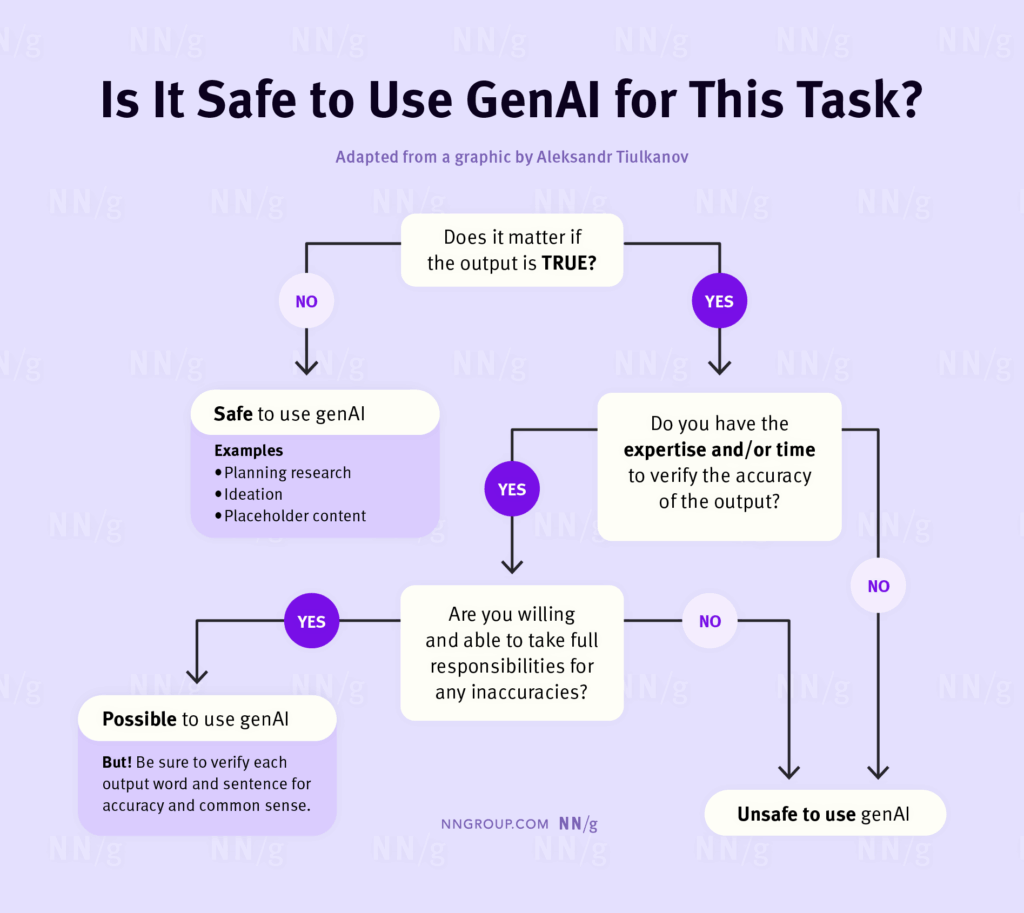

AI comes in different types, generative AI among them. To decide whether to use generative AI for a task, ask whether it matters if the output is true and, if it does, whether you have the expertise to verify the tool’s output. (Adapted from Aleksandr Tiulkanov’s LinkedIn post)
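The two questions in the caption can be written as a small decision function. This is a simplified sketch of those two questions only; the function name and the three outcome labels are assumptions chosen for illustration, and the original chart may include further checks.

```python
def genai_fit(truth_matters: bool, can_verify: bool) -> str:
    """Classify a task using the two questions from the caption.

    The outcome labels are hypothetical, chosen for this sketch.
    """
    if not truth_matters:
        # Accuracy is not critical (e.g. brainstorming), so errors are cheap.
        return "ok to use"
    if can_verify:
        # Accuracy matters, but a qualified person can check the output.
        return "use, then verify"
    # Accuracy matters and nobody can check it.
    return "avoid"


print(genai_fit(truth_matters=True, can_verify=False))
```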

The Red-Team Loop: Embedding Structured Dissent

To counter groupthink and executive overconfidence, Red-Team Loop systems employ AI to automate adversarial reviews of strategy and operational decisions. Verizon, for instance, uses an AI framework that captures assumptions, risks, and anticipated outcomes for major decisions, then generates simulated critiques and alternative scenarios—sometimes challenging senior executives on blind spots they themselves hadn’t recognized.

- By proactively “red-teaming” their own decisions, leaders foster a culture where dissent is routine, rational, and data-driven—not ad hoc or punitive.

- The approach is especially valuable in M&A, crisis management, and product launches, where high-stakes, high-ambiguity decisions benefit from rigorous challenge.

- Leading boards now expect Red-Team Loops as part of their fiduciary duty, recognizing that the cost of missed risks is measured not just in dollars but in reputation and long-term viability.
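The capture step of a Red-Team Loop (assumptions, risks, and anticipated outcomes) can be pictured as a structured record from which challenge questions are derived. The sketch below is hypothetical: the `DecisionRecord` class, its field names, and the question templates are assumptions for illustration, and it uses fixed templates where a real system would presumably call an AI model.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    """A hypothetical record of one major decision."""
    title: str
    assumptions: list[str] = field(default_factory=list)
    risks: list[str] = field(default_factory=list)
    expected_outcomes: list[str] = field(default_factory=list)


def challenge_prompts(record: DecisionRecord) -> list[str]:
    """Turn each captured item into an adversarial question."""
    prompts = [
        f"What evidence would show that '{a}' is false?"
        for a in record.assumptions
    ]
    prompts += [
        f"What happens if the risk '{r}' materializes earlier than planned?"
        for r in record.risks
    ]
    prompts += [
        f"What alternative scenario leads away from '{o}'?"
        for o in record.expected_outcomes
    ]
    return prompts


# Illustrative data only.
record = DecisionRecord(
    title="Launch product X in Q3",
    assumptions=["demand is stable"],
    risks=["supplier delay"],
    expected_outcomes=["10% revenue lift"],
)
prompts = challenge_prompts(record)
for p in prompts:
    print(p)
```

Writing assumptions down before the critique is generated is the design point: the challenge targets what the team actually committed to, not a reconstruction after the fact.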

Organization Pulse Monitor: Proactive Sensing for Culture and Risk

The Organization Pulse Monitor uses AI to detect weak signals in organizational culture, ethical risk, and operational friction long before traditional metrics or surveys would register them. Some organizations have begun linking AI-powered sentiment analysis of internal communications, workflow behaviors, and network interactions to predict where a culture may be straining, where compliance risks are emerging, or where silent dissent is brewing.

- When Pulse Monitors flagged drops in engagement and early warning signs of burnout, one multinational fast-tracked well-being interventions, pre-empting attrition.

- AI-driven pulse scans also help surface ethical risks—such as exclusionary behaviors or data privacy concerns—enabling leaders to respond immediately, not months later.
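One simple way to think about "weak signal" detection is to compare each new reading with a trailing baseline and flag sharp drops. The sketch below swaps in a plain z-score check as a stand-in for whatever models a real Pulse Monitor would use; the weekly engagement scores, the four-week window, and the cutoff of -2.0 are all assumptions for illustration.

```python
from statistics import mean, stdev


def weak_signals(scores, window=4, z_cutoff=-2.0):
    """Return indexes where a score falls far below its trailing baseline.

    Each score is compared with the `window` readings before it; both
    parameters are assumed values for this sketch.
    """
    flagged = []
    for i in range(window, len(scores)):
        baseline = scores[i - window:i]
        spread = stdev(baseline)
        if spread == 0:
            continue  # a perfectly flat baseline gives no scale for comparison
        z = (scores[i] - mean(baseline)) / spread
        if z <= z_cutoff:
            flagged.append(i)
    return flagged


# Invented weekly engagement scores on a 0-10 scale.
weekly_engagement = [7.1, 7.0, 7.2, 7.1, 7.0, 6.2, 6.0]
alerts = weak_signals(weekly_engagement)
print(alerts)
```

In this example only the first sharp drop is flagged. The following week is not, because the drop has already widened the baseline's spread, which is one reason a production system would likely combine several signals rather than rely on a single threshold.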

Actionable Strategies: Bringing AI Experiments to Leadership

How can senior leaders experiment and innovate with these systems while maximizing value and minimizing risk?

- Map Adoption Hotspots and Blind Spots: Use mirror and pulse data to identify where AI is catalyzing positive behaviors—and where competence penalties or shadow AI usage may be undermining equity or performance. Target interventions accordingly.

- Mobilize Role Model Leaders: Encourage respected senior leaders, particularly those from underrepresented demographics, to visibly experiment with and champion AI tools. Research shows that when these role models use AI openly, adoption gaps shrink and psychological safety rises.

- Redesign Evaluation and Disclosure Policies: Shift performance metrics from subjective ratings of proficiency to objective impact, cycle time, accuracy, and innovation. Blind reviews and private feedback mechanisms can reduce bias against AI users and drive fairer rewards.

- Embed Structured Red-Teaming in Decision Flows: Institutionalize adversarial testing of key decisions, making AI-enabled dissent a standard step—not a threat or afterthought. Leaders should receive regular “contrarian” insights, not just consensus-building reports.

Common Pitfalls and Human Impact

Despite rising investment, fewer than one-third of US employers believe staff are equipped for critical thinking in the AI era, and only 16% of American workers use AI on the job despite widespread availability. The main barriers are not just technical but social: competence penalties, fear of reputation loss, and resistance among influential skeptics.

- Competence Penalty: AI users, especially women and older employees, may face a perception of diminished competence. This undermines adoption and can exacerbate workplace inequality.

- Shadow AI and Hidden Risks: Employees sometimes use unauthorized tools to sidestep the bias attached to visible AI use, exposing the organization to compliance, reputational, and security risks.

- Skill Gaps vs. Work Context: Traditional training falls short without tailored, role-specific feedback loops. AI tutors offer scalable, personal learning, but they must be embedded in daily workflow, not delivered in isolation.

Governance, Ethics, and Sustainable Change

Human-centered leadership isn’t optional—it’s a strategic imperative. Boards and executives must be proactive in:

- Instituting transparent governance for all AI systems (mirrors, loops, monitors), with clear oversight on privacy, fairness, and impact.

- Ensuring structured role-modeling and psychological safety—particularly for vulnerable groups confronting competence penalties.

- Making change management a continuous process, with AI as both coach and sentinel, not just a dashboard.

The call to action for C-suite leaders is urgent and profound: treat responsible, experimental, and self-critical AI adoption as the core discipline of next-generation leadership, not just for efficiency but to build organizations where insight, challenge, and well-being are sustainably enabled. Those who master the trifecta of mirror, loop, and pulse will set the new standard for profitable, human-centered growth in the age of AI.

More about the author:

George Yang is a Toronto-based digital innovator and AI adoption strategist with over 15 years of experience in marketing and digital transformation. As Chair of the AI Working Group at the National Payroll Institute, he helps organizations translate AI strategy into measurable business outcomes. George is passionate about making AI adoption ethical, practical, and impactful, bridging the gap between innovation and implementation across industries. georgeyang.ca