April 2026 – Capital spending on AI has surged into the hundreds of billions of dollars, yet the economic payoff remains elusive. Despite rapid investment and widespread experimentation with generative AI tools, productivity gains have yet to show up clearly in aggregate data across advanced economies. That raises an important question: who will be first to turn this momentum into measurable gains?

In “From Hype to Output: How AI Investment Translates to Real Productivity Gains,” I find that while AI offers a path to stronger economic performance and could help address Canada’s long-standing productivity challenges, realizing these gains will require policies that accelerate business adoption and diffusion, which remain uneven across most industries and regions. Such policies include removing barriers, ensuring access to data resources, and encouraging integration across firms and sectors.

For the Silo, Rosalie Wyonch/C.D. Howe

- Artificial intelligence has renewed optimism about improving Canada’s long-standing productivity problem, but history shows that general-purpose technologies often take decades to produce measurable economy-wide gains. Early adoption typically produces modest improvements as firms experiment and invest in complementary assets such as data, skills, and organizational changes.

- Canada performs reasonably well in international AI rankings and produces strong research output, but it lags leading countries in computing capacity and commercialization. Canadians are among the most frequent users of generative AI tools globally, yet business adoption remains uneven and relatively limited.

- Canadian data show that about 12 percent of businesses currently use AI, and most report little change in employment following adoption. Many firms say AI is not relevant to their operations or lack the knowledge needed to implement it effectively, suggesting adoption barriers remain significant.

- Policy should therefore prioritize accelerating AI adoption and diffusion across sectors while maintaining Canada’s research and infrastructure capacity. Governments can support this by improving regulatory clarity, expanding data access, strengthening AI literacy, and encouraging firms to experiment and integrate AI into their operations.

Introduction

Canada faces a persistent productivity challenge.

Growth in output per work-hour has stagnated for decades, and the gap with the United States continues to widen.1 Policymakers have long sought levers to reverse this trend, and the rapid emergence of artificial intelligence (AI) – particularly generative AI since late 2022 – has prompted renewed optimism that a transformative technology might finally deliver the productivity gains that have proved elusive.

This Commentary discusses the impacts of AI technologies, with a focus on recent generative AI. As general-purpose tools, these technologies are unusual in their low barriers to adoption and use, in terms of both skill and cost. Such low barriers create the potential for rapid adoption and scaling across many sectors of the economy. Yet enthusiasm must be tempered by evidence: general-purpose technologies such as AI have historically taken decades to reshape economies, and the path from adoption to measurable productivity growth is neither linear nor guaranteed.

This Commentary examines AI’s potential contribution to Canadian productivity growth through an evidence-based policy lens. It begins by establishing what AI technologies are and how technological change translates into economic output, drawing on the concept of the “productivity J-curve” to explain why early-stage adoption often fails to register in macroeconomic statistics. The paper then situates the current AI investment boom in a historical and international context and summarizes research quantifying both the infrastructure-driven and adoption-driven channels through which AI may affect GDP. A comparative analysis positions Canada among its international peers, revealing a paradox: world-class research output coexists with middling commercial translation and infrastructure capacity. Detailed examination of Canadian business adoption data shows significant variation across industries and provinces, with early signs that initial experimentation may be giving way to more selective, sustained implementation.

The central argument is that maximizing the likelihood that AI technologies will boost Canada’s productivity growth requires policy focused on accelerating AI adoption and diffusion across sectors. While government investment in computing capacity supports the domestic development of AI technologies (and democratizes access to computing power), government resource constraints, significant uncertainty about AI development trajectories, and the relatively small size of the Canadian AI economy suggest that AI infrastructure policies should focus on maintaining and improving Canada’s relative international competitiveness. Policies that encourage firms to experiment, learn, and eventually reimagine their operations around AI capabilities can position Canada to capture productivity gains as the technology matures. AI, as a general-purpose technology, can be deployed across many industries and is linked to other economic development and industrial policy priorities, including small- and medium-sized business growth and international trade diversification. This paper concludes with policy recommendations that balance the uncertain timeline of AI’s macroeconomic impact against the risk of falling further behind more aggressive international competitors.

The Current AI Boom in Context

The emergence of generative AI since late 2022 has triggered an unprecedented wave of capital investment and rapid enterprise adoption. This technological shift is reshaping economic growth dynamics through two channels: the direct contribution of infrastructure investment to GDP and longer-term productivity gains from AI adoption across industries. Understanding the magnitude, timing, and distribution of these effects is essential for policymakers seeking to position their economies to benefit from AI’s transformative potential. Much of this section refers to the US economy, where more data on AI investment and GDP effects are available.

Capital expenditure on AI infrastructure has reached historic proportions. Hyperscaler companies – Amazon, Google, Meta, Microsoft, and Oracle – allocated about $342 billion to capital expenditures in 2025, a 62 percent increase from the previous year (Aliaga 2025). Estimates suggest AI-related capital expenditures could reach US$527 billion in 2026 (Goldman Sachs 2025). This spending encompasses semiconductors, data centres, networking equipment, and the power infrastructure required to support “compute”-intensive AI workloads.

In Canada, the federal government announced $2 billion over five years, starting in 2024-25, for the Canadian Sovereign AI Strategy, including $705 million for “compute” infrastructure. Microsoft has also announced that it is investing $19 billion in AI infrastructure in Canada between 2023 and 2027, including $7.5 billion over the next two years (Smith 2025). Industry categories related to AI2 accounted for $195 billion in 2021 and grew to $229 billion in 2024, representing 9.3 percent and 10 percent of Canada’s GDP, respectively.

The impact of AI investment on the US economy is significant, though estimates vary widely. In the first nine months of 2025, GDP product categories related to AI investment (such as computing and communications equipment, data centre structures, software, and research and development) represented 37 percent of real GDP growth and 8 percent of real GDP (Levine 2025).3 Other estimates suggest AI contributed roughly 1 percentage point to US GDP growth in 2025 (Boussour 2025; Goldman Sachs 2025; Aliaga 2025), making it the second-largest contributor to growth after consumption (Singh 2026).4 The scale of investment in AI infrastructure in the US has led to debate about whether it is fueling an equity market bubble (BNP Paribas 2025; Barnette and Peterson 2025). Such concerns have precedent: there have been multiple “AI winters” in the past, periods of significant decline in enthusiasm, funding, and progress in the field of AI.5

However, much of the spending on AI infrastructure does not translate directly into domestic GDP because many inputs, such as semiconductors manufactured in Taiwan, are imported. This is particularly important for Canada, since both infrastructure inputs and the outputs of dominant AI companies (AI products and software) are predominantly produced abroad. Secondary economic effects and labour dynamics associated with the expansion of infrastructure investment also matter. Data centre construction has had significant impacts on the construction industry in the United States: the top 50 contractors by size doubled their revenues within a year, and salaries for trades workers are 32 percent higher on data centre projects than on other construction (Paoli 2025). Once built, however, data centres require relatively few permanent workers.

There are also counterbalancing factors to consider. Corrado et al. (2025) show that while investment in data as an intangible asset can improve efficiency, the growing importance of proprietary datasets slows the diffusion of innovation. So far, the negative effect on diffusion has outweighed the efficiency gains from intangible data capital.

Currently, the main channel through which AI is measurably increasing US GDP is capital expenditures. Over the longer term, however, these investments must translate to products and services that meaningfully increase productivity to have a sustained effect on growth. So far, the acceleration of AI development and diffusion has not been associated with higher productivity growth at the macroeconomic level across G7 countries (Filippucci et al. 2024; Andre and Gal 2024; Goldin et al. 2024). As with earlier general-purpose technologies, widespread productivity gains may take years to materialize.

Estimates of AI’s long-term productivity impact for the US vary widely – from 1.5 percent labour-productivity growth annually (Goldman Sachs 2025) to less than 1 percent cumulatively over a decade (Acemoglu 2024), as shown in Figure 1. Some researchers argue that the long-run impact may instead arise from persistently faster growth rather than a one-time productivity shift (Baily, Brynjolfsson, and Korinek 2023). The timeline and magnitude of significant macroeconomic growth related to AI adoption depend on continued improvements in AI capabilities, widespread adoption, and complementarities with human skills and other technologies (Filippucci et al. 2024).
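To put the gap between these estimates in perspective, consider a rough calculation. Assuming, purely for illustration, that the Goldman Sachs figure is a constant annual addition to labour-productivity growth that compounds over ten years, it would raise the level of labour productivity by about 16 percent, compared with less than 1 percent cumulatively in Acemoglu’s estimate:

$$
(1 + 0.015)^{10} - 1 \approx 0.16 \quad \text{versus} \quad < 0.01
$$

The comparison is only indicative: the two studies use different methods and measures, so the figures are not strictly like-for-like.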

AI Already Affecting Labour Market

There is some evidence that AI is already affecting the labour market. Brynjolfsson, Chander, and Chen (2025) estimate that early-career workers in AI-exposed occupations experienced a 16 percent relative decline in employment, while employment remained stable for more experienced workers. Across occupational categories in the US, the share of occupations that could have at least half of their tasks affected by GenAI ranges from 2.6 to 75.5 percent (Arnon 2025). Research on the UK labour market finds that 11 percent of occupational tasks are exposed to generative AI automation or augmentation through “low-hanging fruit” implementation cases, and that up to 59 percent of tasks will be exposed as generative AI develops into more integrated systems (Jung and Desikan 2024). Heneseke et al. (2025) find that the price premium for AI-exposed tasks declined by 12 percent from 2017 to 2023/24 and that job postings were 5.5 percent lower in 2025-Q2 than they would have been had pre-GPT hiring patterns persisted.

As AI technology is adopted, some labour market disruption is inevitable, but AI need not lead to widespread unemployment. Net job losses occur only if labour-saving innovation outpaces growth in demand for new products and services. Further, the potential for automation does not necessarily translate into actual automation: the decision to automate depends on factors such as firm size, competitive pressure, and the cost of a machine relative to the cost of human labour. As technology diffuses and improves productivity, GDP and wages rise, which increases demand for goods and services and creates new employment. Rapid technological progress is regularly accompanied by fears of widespread unemployment. In reality, however, markets adjust dynamically to technology adoption over time, and widespread unemployment is unlikely (Oschinski and Wyonch 2017).6

At the firm level, growing evidence suggests AI can improve labour productivity in many contexts (Figure 2, Table A1). A meta-analysis of research measuring the productivity effects of generative AI finds mixed results across studies and applications, but on average, the use of GenAI tools increased labour productivity in the tasks or departments studied by 17 percent (Coupe and Wu 2025).

Despite these gains, there is a notable divide between the wide adoption of general-purpose tools like ChatGPT and the limited adoption of enterprise-grade AI systems (Challapally et al. 2025). Nearly 80 percent of organizations report exploring or piloting large language model (LLM) products, and 40 percent report deployment. These tools primarily enhance individual worker productivity and are not deployed for core business functions.7 By contrast, 60 percent of companies have evaluated enterprise or custom systems, yet only 5 percent reach production.

Tools that succeeded had low configuration barriers and immediately noticeable value, while those that required extensive upfront customization often stalled at the pilot stage. According to Challapally et al. (2025), the core barrier to scaling is not infrastructure, regulation, or talent but GenAI’s lack of capacity to learn or remember over time (particularly for static enterprise tools relative to rapidly evolving consumer-facing tools).8

Aside from enterprise-level adoption and development, individual workers are adopting AI tools extensively to enhance their personal productivity. In 2024, 40 percent of the working-age population in the US was using GenAI, 23 percent used it for work, and 9 percent used it every workday (Bick, Blandin, and Deming 2025).

The high failure rate of AI pilots can be explained by the productivity J-curve and the current stage of development and adoption, in which firms accumulate intangible capital that leads to future productivity improvement. On the one hand, a high failure rate reflects active experimentation and contributes to ongoing development. On the other, companies that experiment with AI and abandon it may be less likely to adopt it in the future, despite the technology’s rapid evolution.

Uneven Gains

Productivity gains from new technologies are uneven across sectors and difficult to predict because they depend on where technological change occurs.9 Adoption is also shaped by market competition, regulation, and the current distribution of technology within industries (Howitt 2015). Given the uncertainty around AI’s trajectory and the sectors where it may generate the largest gains, productivity improvements are possible, but expectations may also exceed outcomes. The transition from initial development and experimentation to widespread adoption, and eventually to integration and process change, can be accelerated by addressing technical, institutional, and bureaucratic barriers (Ouimetter, Teather, and Allison 2024). Empirical modelling suggests that achieving long-term operational success requires readiness in people, processes, and data, in addition to technology and capital investment (Uren and Edwards 2023). Government policy should therefore be economically broad-based rather than narrowly focused on particular industries or applications. It should also be cautious, balancing uncertain long-run productivity gains against the need to maintain competitiveness in a rapidly developing global technology market.

How Canada Compares Internationally

Canada performs reasonably well internationally, placing eighth overall across five different international AI rankings (Figure 3).10 Rankings vary widely depending on their focus and data sources (Table A3). For example, China ranks second in the Global AI Index but 32nd in the Global Index on Responsible AI (GCG 2024). Canada ranks highest in the Global AI Index (eighth) and lowest on the IMF’s AI Preparedness Index (18th). Canada has also fallen in the rankings over time, from fourth in 2021 to eighth in 2025 on the Global AI Index (White and Cesareo 2025) and from fifth in 2022 to 12th in 2025 on the Government AI Readiness ranking (Rogerson et al. 2023; Iida et al. 2026). Notably, Canada’s score improved across the categories measured in the Oxford AI Readiness Index, suggesting that Canada has advanced in AI adoption, development, and public policy but has not kept pace with some international peers.

In terms of development and infrastructure, Canada is a high performer but not a clear leader on the metrics tracked by the OECD’s AI Policy Observatory. More AI research is produced in Canada than in the US or UK relative to population size. Canada has higher venture capital investment in AI startups (as a share of GDP) than many peer countries, but falls far behind the US and Israel, the clear leaders in the category (OECD.AI 2026a,b). Canada also maintains a respectable presence in computing infrastructure, with 19 of the world’s top 500 supercomputers (Top500 2025), and hosts public cloud regions (Lehdonvirta et al. 2025). However, the processing power of those supercomputers ranks only 18th out of 20 comparator countries (Top500 2025).

Strong Research but Weak Commercialization for Canada

Taken together, these indicators reflect a familiar pattern in Canada’s innovation system: strong research output but weaker commercialization. Continued investment in data and processing infrastructure will help maintain competitiveness, but public policy should also encourage private investment.11 Given the scale of investment in countries such as the United States and China, Canada is unlikely to match their computing capacity regardless of policy choices.

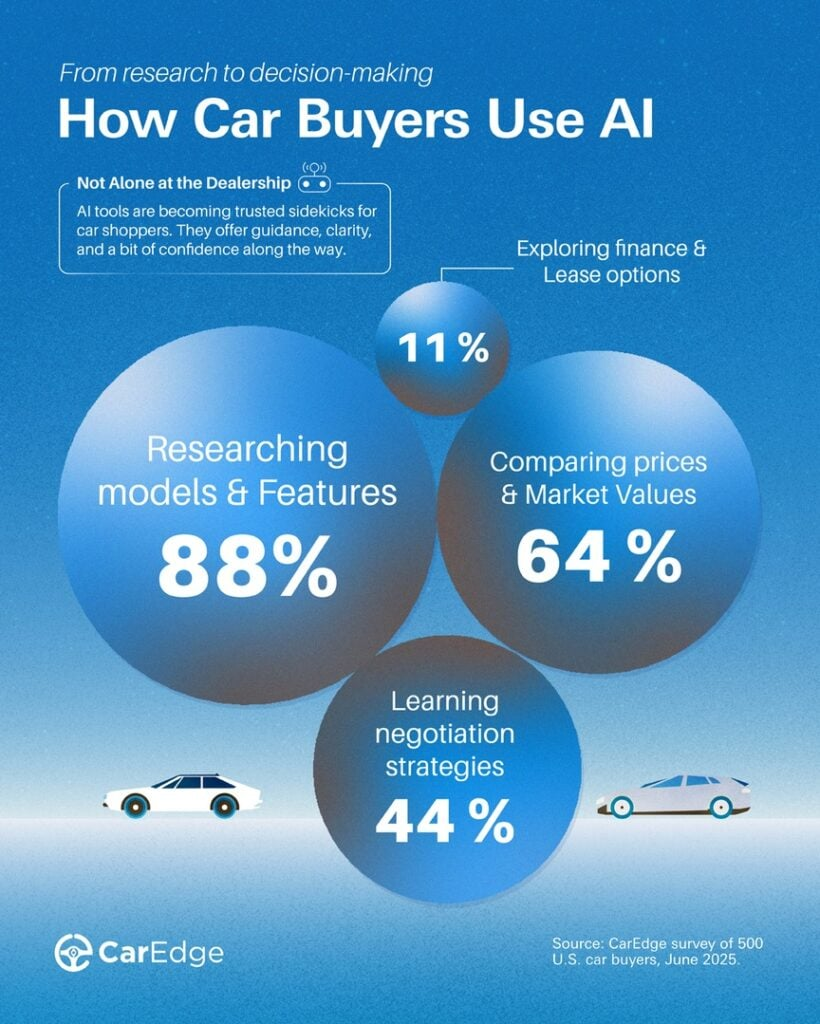

Evaluating the usage of major LLMs by country shows that the US and India account for the largest proportions of total users and interactions. After accounting for population size, however, Canada, Australia, and France show the highest usage rates among individuals (Figure 4).12 While individual use does not directly translate to higher productivity in the production of goods and services, familiarity and comfort with AI tools among the general labour force will improve enterprise adoption prospects. A labour force with AI skills reduces enterprise training costs related to adoption and improves the likelihood of employees generating ideas for reorganizing business processes. Conversely, differences between consumer and enterprise applications can create barriers to workplace adoption (Challapally et al. 2025). Even so, if individual workers are using AI tools to increase their personal productivity, there will be marginal productivity effects from using publicly available tools for work tasks.

High individual adoption in Canada contrasts with lower rates of business adoption.

Based on the most recent comparable data, Canada falls below the OECD average overall and for the proportion of medium and large businesses adopting AI (Figure 5). In Korea, Denmark, and Finland, more than half of large companies are using AI.

International data on business adoption of AI vary widely. The OECD estimates that 20.2 percent of businesses with more than 10 employees use AI,13 while the McKinsey Global Survey finds that 88 percent of businesses experiment with AI in at least one function (McKinsey 2025; OECD 2025). However, only 10 percent of businesses in G7 countries were using AI for core business functions in 2024. Only 2 to 6 percent of firms across G7 countries have high-intensity adoption related to core business functions (Filippucci et al. 2025). High-intensity adoption is highest in the US, followed by Canada, the UK, and Germany.

There are common features of AI adoption across countries:

- Firm size: larger businesses are using AI at higher rates than medium or small businesses.14 This could be related to factors including fixed costs of adoption, larger data resources, lower financing constraints, and complementary intangible assets such as information and communication technology (ICT) skills and research capacity (Calvino and Fontanelli 2023).

- Industry concentration: adoption is highest in ICT, followed by professional, scientific and technical services, and communications and marketing (OECD 2025).15

- Firm characteristics: more productive and younger enterprises are more likely to adopt AI (Calvino and Fontanelli 2023; Calvino, Costa, and Haerie, forthcoming).16

Modelled projections suggest that high-intensity AI adoption among enterprises could reach 13 to 63 percent across G7 countries over the next decade, depending on adoption rates and technological progress (Filippucci et al. 2025). The US is projected to have the highest adoption rates and the largest annual productivity growth related to AI, while Japan is projected to see the lowest. Canada ranks second in projected adoption across scenarios but third or fourth in projected labour productivity growth, suggesting that its productivity gains will likely be modest relative to international peers with similar or slightly lower adoption rates (Figure 6).17 Estimates suggest that Canada could reach 32 to 62 percent enterprise adoption over the next 10 years, contributing 0.35 to 1.13 percentage points to annual labour productivity growth.
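A back-of-the-envelope calculation helps translate this annual range into a cumulative level effect. Assuming, for illustration only, that the contribution is constant each year and compounds over the full decade (and ignoring interactions with baseline growth), 0.35 to 1.13 percentage points a year would leave labour productivity roughly 3.6 to 11.9 percent higher after ten years than it would otherwise be:

$$
(1 + 0.0035)^{10} - 1 \approx 0.036 \qquad (1 + 0.0113)^{10} - 1 \approx 0.119
$$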

Overall, available data show that Canada is about average in terms of adoption and development capacity, while the US is a clear leader. Canada has lower AI infrastructure capacity than some peer countries but remains above average. Canadian individuals have higher adoption rates than those in most other countries. Business adoption is less clear-cut: some indicators place Canada below the international average, while others rank Canada second in the G7 for high-intensity AI adoption in core business functions. To close the productivity gap, Canada will need sufficient AI infrastructure to remain competitive and broad adoption, but most importantly, faster and more effective AI-enabled innovation that adapts business processes and creates new products and services.

Canada’s AI Adoption Landscape

Having compared Canada with international peers, this section examines business adoption within Canada.

In the third quarter of 2025, 14.5 percent of businesses were planning to use AI within the next 12 months, a 3.9-percentage-point increase from the previous year. As of the second quarter of 2025, 12.2 percent were already using AI. But two-thirds of businesses reported no plans to adopt AI, and 18.9 percent were uncertain (Bryan, Sood, and Johnston 2025a). About six in 10 businesses think that AI investment is not important or not relevant to their business.

Planned adoption is highest in Ontario, British Columbia, Nova Scotia, and New Brunswick, with New Brunswick seeing the largest growth in the proportion of businesses planning to use AI compared with the previous year (Figure 7). By industry, adoption is highest in information and cultural industries; finance and insurance; professional, scientific, and technical services; and healthcare and social services. It is lowest in mining and resource extraction, transportation and warehousing, and construction (Table A1).

Comparing current and planned AI use shows that the share of businesses planning to use AI over the next 12 months is only 2.3 percentage points higher than the share already using it, compared with a 6.1-percentage-point increase from 2024 to 2025. In several sectors, including manufacturing, resource extraction, wholesale and retail trade, and professional services, planned adoption is lower than current usage levels in Q2 2025 (Table 1). Adoption is most likely to increase in accommodation, food services, and real estate over the next year.

Provincial patterns are also uneven. Planned AI use in the information and cultural industries declines in five provinces (including a 39.7-percentage-point drop in New Brunswick) but rises in Ontario, Alberta, and Saskatchewan. In Q2 2025, businesses in Ontario, Quebec, and Manitoba were using AI at higher rates than the national average. By Q3 2026, however, only businesses in Ontario intend to use AI at higher rates than the national average. Businesses in Quebec and Alberta show little appetite for further AI adoption, while those in Prince Edward Island report plans to reduce AI use in 2026 compared with 2025.

Businesses engaging in some international activities are adopting AI at higher rates. Across all provinces, businesses that export services were using AI at higher rates than average. The same is true for importing services in all provinces except Nova Scotia. Businesses that relocated activities outside Canada or made investments outside Canada are also more likely to be using AI at the national level (though these same businesses show the largest declines in intended use over the coming year). Importing and exporting goods has no clear association with AI adoption across provinces.

Overall, businesses involved in international services trade or cross-border investment show higher rates of AI use. This gap may narrow slightly over the coming year: firms without international activities report a larger increase in intended AI use than the overall business average (3.6 compared with 2.3 percentage points). Meanwhile, firms relocating employees or operations abroad, or investing internationally, report declining intentions to expand AI use.

Canada’s AI adoption landscape (as of Q2 2025) generally mirrors international trends. Younger businesses (10 years or less) adopt AI more frequently than older firms, and large firms adopt more than small ones. The highest adoption rates are concentrated in ICT, finance, and professional, scientific, and technical services. Private enterprises had significantly higher adoption than government agencies and non-profits.

Planned adoption over the next year (reported in Q3 2025) suggests both a continuation of these trends and broader diffusion. Government agencies and non-profits serving businesses show larger planned increases in AI use than private enterprises. Only 0.1 percent of government agencies reported using AI in early 2025, but 24.3 percent plan to use it within the next year. Younger firms plan to continue adopting AI at higher rates than older firms.

Planned adoption by firm size varies across provinces. In New Brunswick and Saskatchewan, small (5-19 employees) and medium-sized (20-99 employees) companies report adoption intentions above the national average, while larger firms show declining adoption. In Quebec, by contrast, larger firms continue to adopt AI more rapidly than smaller firms, widening the adoption gap.

Turning to employment effects, most businesses using AI report little change in staffing levels. Among firms that adopted AI in the previous 12 months (12.2 percent of businesses in Q2 2025), 89.4 percent reported no related change in employment (Bryan, Sood, and Johnston 2025b). By comparison, about 70 percent of firms planning to adopt AI expected no employment change, while another 10 percent were uncertain (Bryan, Sood, and Johnston 2025a). The gap between these figures suggests that firms may overestimate potential labour savings before implementation. After adoption, 15.1 percent of firms reported no reduction in employee tasks, while 47.2 percent reported only small impacts.

For businesses that do not plan to use AI in the next year, the most common reason is that the technology is not relevant to their products or services (78.1 percent). Only 1.5 percent of businesses said that previous use of AI did not meet expectations. Other common barriers include limited knowledge of AI, privacy or security concerns, and the technology’s perceived immaturity. Industries in which fewer firms report AI as irrelevant tend to cite lack of knowledge as the primary barrier to adoption.18

Government agencies report the highest rates of disappointment with AI performance (14.8 percent citing unmet expectations as the reason for non-use).19 Regionally, businesses in British Columbia, Alberta, and Ontario are the least likely to say AI is irrelevant to their activities (74.1-76.7 percent), while New Brunswick reports the highest share (88.3 percent). Notably, laws and regulations preventing or restricting the use of AI are among the least commonly reported reasons for not using AI. This suggests that existing industry regulations are not a major barrier to adoption in most industries.

Overall, Canadian data suggest that AI adoption continues to grow but at a slower pace than in 2024. In some sectors, AI use is likely to decline, suggesting that some businesses piloted or implemented AI and did not realize sufficient benefits to continue using the technology. While individuals in Canada use generative AI tools more frequently than those in many peer countries, business adoption remains comparatively limited, and most firms report little measurable labour savings from AI use so far.

Although these trends could indicate declining enthusiasm or unmet expectations, they are also consistent with the early stages of adoption for past general-purpose technologies, where experimentation precedes widespread productivity gains.20

Policy Discussion

Policymakers must recognize that maintaining Canada’s competitive standing requires deliberate, differentiated strategies for AI infrastructure, development, and adoption, objectives that demand distinct policy approaches, skill sets, and resources. While the country currently performs respectably in international rankings, its declining relative performance across multiple indices is cause for concern.

There is significant uncertainty in trend observations within Canada and across countries. From the beginning of 2024 to the present, there are only two observations of business AI adoption and intended use (from the Canadian Survey on Business Conditions). The OECD international comparisons also depend on this single survey, and the most recent publicly available Canadian data are for 2023. Given how rapidly AI adoption and development are changing, and the technology’s potential for significant growth and productivity effects, we have shockingly little information about how AI is being deployed over time. Statistics Canada is ending the Canadian Survey on Business Conditions (CSBC), with the last scheduled release in August 2026. Budget 2025 allocated $25 million over six years and $4.5 million ongoing for Statistics Canada to implement the AI and Technology Measurement Program (TechStat), which is intended to provide data and insights on how organizations use AI and on its impact on Canadians, the labour force, and the economy. There is a clear need for national statistical agencies to develop a standardized approach to measuring AI adoption so that rates can be compared across countries, sectors, and over time. This expansion in the monitoring of technology adoption will enable social and economic policy research and inform evidence-based policymaking.21

Temporal considerations must also inform policy design. The substantial productivity gains that AI promises remain, by most credible estimates, a decade or more away. Current measurable benefits, while real, derive primarily from infrastructure buildouts and the “replace” phase of technology adoption, wherein AI-enabled tools substitute for existing technologies within established workflows and processes. These incremental improvements, averaging around 17 percent in labour productivity across various studies, represent meaningful but modest gains. The transformative potential of AI will materialize only when industries advance to the “reimagine” phase, restructuring business models, production processes, and organizational architectures around AI capabilities. History suggests this transition requires substantial complementary investments in training, organizational restructuring, and supporting infrastructure – investments whose returns may not register in productivity statistics for years.

Infrastructure and Data

Data centres have near-immediate positive impacts on GDP and employment while they are being built. Once operational, they become an input to production themselves, requiring significant energy but minimal labour.22 This means that government expenditure directed toward AI development and infrastructure has inherent limitations as an ongoing economic stimulus. This reality does not argue against infrastructure investment, but rather for calibrated expectations about its short- and long-term macroeconomic impact, and for complementary policies that maximize domestic value creation where possible. Infrastructure is an investment in the foundational inputs that enable current and future AI development, and its direct GDP impacts occur mainly in the near term. Data centres do not themselves drive ongoing productivity growth; they increase total raw computing capacity. How that capacity is used and deployed determines the longer-term growth and productivity impacts.

The government has a significant role to play in developing its own internal capacity for AI development and adoption, and in ensuring reasonable access to computing and data resources across economic sectors and business sizes (in particular, for non-profit social enterprises and academic research). The latter objective can be achieved through a combination of policies, including direct spending on infrastructure, funding for low-cost or targeted borrowing programs to encourage business investment, and measures that improve access for small, social and non-profit enterprises. In addition, developing open data assets, and investing in initiatives that aggregate high-value administrative and public-sector data while maintaining appropriate privacy and quality controls, would enable AI development and improve accessibility for smaller and lower-resource enterprises.23

Since large companies are already making significant investments in AI infrastructure around the globe, government policy should aim to improve Canada’s attractiveness for that investment and development. For example, Bill C-15, tabled by the government in November 2025, includes accelerated capital cost allowance and expensing measures. The proposed measures are time-limited and include immediate capital cost deductions for certain productivity-enhancing assets.24 Beyond capital, data centres require land; building and permit approvals; potentially environmental, energy, and Indigenous impact assessments; and, once approved, skilled labour to construct them. Once built, they require significant energy for ongoing operations.

A comprehensive strategy will require all levels of government to streamline regulatory approvals and, where possible, implement parallel instead of sequential regulatory steps to speed development timelines. Reducing administrative development costs and shortening approval timelines would benefit general economic development, but could take significant time to implement. In the short term, a targeted strategy could involve identifying suitable development sites at the local or regional level and pre-screening them for energy capacity, required environmental impact assessments, and other considerations.

Adoption and Diffusion

Adoption-focused policy, by contrast, may offer only marginal immediate benefits but has greater potential to generate productivity growth in the long term.25 The analysis of business intentions to use AI in the future shows that the majority of businesses do not think it is currently relevant to their products or services. Further, the limited data available shows that AI adoption across industries is slowing and could decline in five industries over the coming year.

Among companies that think AI could be relevant to their business but do not plan to use it, the most commonly cited reasons are a lack of knowledge about the technology, concerns about privacy and security, and a view that the technology is not yet mature enough. Manufacturing and wholesale trade, in particular, show a lower proportion of firms that consider AI irrelevant to their products or services, and in these industries over 10 percent of firms are not planning to use AI because of a lack of skilled labour.

Given the relatively early stage of adoption and development, these barriers provide a target for government policy intervention. In particular, the government has signalled that it plans to update Canada’s privacy legislation for AI, and it should do so sooner rather than later. Updated privacy legislation could have the dual benefit of reducing uncertainty for potential adopters and providing clarity for companies planning to develop AI technologies using Canadian data.26 Similarly, governments have a role to play in increasing AI literacy and knowledge about the technology (see C.D. Howe Institute, forthcoming). Efforts to accelerate the adoption and development of AI in Canadian businesses are also supported by various general and specific tax subsidies, including the Scientific Research and Experimental Development (SR&ED) credit and the capital cost allowances discussed above. While employee-training costs are generally tax-deductible, a more comprehensive skills investment strategy is needed, particularly in developing training content and making it available to mid-career professionals, with a focus on practical applications. Expanding the workforce capable of implementing AI solutions across industries is a critical complement to infrastructure investment and is essential to encourage broad adoption and process innovation.

The evidence presented in this analysis shows that adoption in Canada generally follows international patterns: larger and younger firms tend to adopt AI at higher rates. This suggests that targeted policies encouraging technology uptake and growth in small and medium-sized enterprises could speed diffusion across sectors. In addition, engaging in international trade appears to be associated with higher use of AI, although intended future use is increasing among companies with no international business activities. In November 2025, Canada, Australia, and India agreed to enter into a trilateral technology and innovation partnership that covers green energy, resilient supply chains for critical minerals, and the development and mass adoption of AI to improve welfare. Canada should continue working with partners to secure critical input supply chains and to enhance international cooperation on AI development and adoption.

The findings in this Commentary suggest that policies promoting trade diversification and encouraging a broader base of Canadian businesses to engage internationally are interrelated with AI adoption goals. Rather than viewing AI policy in isolation, governments should recognize how existing priorities around business growth, trade promotion, and export development can reinforce technology adoption objectives.

Conclusion

The uncertainty about AI’s development trajectory must be weighed against its potential to be revolutionary and a significant source of ever-elusive productivity growth. Canada cannot afford to miss an opportunity that may substantially improve living standards and economic competitiveness over time. On the other hand, the uncertainty surrounding AI’s ultimate productivity impact (credible estimates range from less than 1 percent to 15 percent in cumulative GDP gains over the next decade) counsels against excessive concentration of public resources. This suggests a multi-pronged strategy that includes investment in infrastructure, enhanced research and development policy,27 and updated privacy legislation. In addition, the government needs to implement the TechStat initiative rapidly to improve monitoring of AI diffusion and its economic effects. Many policies supporting AI infrastructure, development, and adoption are in the works, while data to monitor their effects remains highly limited. The evidence base needs to expand to inform comprehensive and coordinated policy.

Policy should continue to pursue a balanced approach: support for infrastructure development and research capacity to maintain Canada’s standing as a credible AI nation, combined with robust efforts to accelerate adoption across sectors and firm sizes. However, governments should resist the temptation to overemphasize AI at the expense of broader economic priorities.28 Government policy can support AI development and diffusion without direct spending by fostering a supportive, principles-based regulatory environment that manages risks and potential harms, combined with some stimulus in the form of demand-side policies aimed at potential adopters.29 AI represents one important element of Canada’s productivity agenda, but not its entirety. Maintaining perspective on AI’s current limitations and its uncertain trajectory will help ensure that policy balances risks and limited government resources while remaining responsive to emerging evidence.

The author extends gratitude to Peter MacKenzie, Anindya Sen, Daniel Schwanen, Andrew Sharpe, Tingting Zhang, and several anonymous referees for valuable comments and suggestions. The author retains responsibility for any errors and the views expressed.

Appendix:

REFERENCES

Acemoglu, Daron. 2024. “The Simple Macroeconomics of AI.” NBER Working Paper 32487. MIT. https://economics.mit.edu/sites/default/files/2024-04/The%20Simple%20Macroeconomics%20of%20AI.pdf

Acemoglu, D., and P. Restrepo. 2019. “Automation and New Tasks: How Technology Displaces and Reinstates Labor.” Journal of Economic Perspectives 33(2): 3–30.

Agrawal, A., J. Gans, and A. Goldfarb. 2023. “Similarities and Differences in the Adoption of General Purpose Technologies.” NBER Working Paper 30976.

Aldasoro, Inaki, Leonardo Gambacorta, Rozália Pál, Debora Revoltella, Christoph Weiss, and Marcin Wolski. 2026. “How AI Is Affecting Productivity and Jobs in Europe.” https://cepr.org/voxeu/columns/how-ai-affecting-productivity-and-jobs-europe

Aliaga, S. 2025. “Is AI Already Driving U.S. Growth?” J.P. Morgan Asset Management. https://am.jpmorgan.com/us/en/asset-management/adv/insights/market-insights/market-updates/on-the-minds-of-investors/is-ai-already-driving-us-growth/

Andre, C., and P. Gal. 2024. “Reviving Productivity Growth: A Review of Policies.” OECD Economics Department Working Paper 1822. https://www.oecd.org/content/dam/oecd/en/publications/reports/2024/10/reviving-productivity-growth_936a1da3/61244acd-en.pdf

Appel, R., P. McCrory, A. Tamkin, M. McCain, T. Neylon, and M. Stern. 2025. “The Anthropic Economic Index Report: Uneven Geographic and Enterprise AI Adoption.” Anthropic. https://www.anthropic.com/research/anthropic-economic-index-september-2025-report

Arnon, A. 2025. “The Projected Impact of Generative AI on Future Productivity Growth.” Penn Wharton Budget Model, University of Pennsylvania. https://budgetmodel.wharton.upenn.edu/p/2025-09-08-the-projected-impact-of-generative-ai-on-future-productivity-growth/

Baily, M., E. Brynjolfsson, and A. Korinek. 2023. “Machines of Mind: The Case for an AI-Powered Productivity Boom.” Brookings. https://www.brookings.edu/articles/machines-of-mind-the-casefor-an-ai-powered-productivity-boo

Barnette, C., and M. Peterson. 2025. “Are We in a Bubble? The AI Boom in Context.” BlackRock Advisor Centre. https://www.blackrock.com/us/financial-professionals/insights/ai-tech-bubble

Bureau of Economic Analysis (BEA). 2025. “Gross Domestic Product, 3rd Quarter 2025 (Initial Estimate) and Corporate Profits (Preliminary).” https://www.bea.gov/index.php/news/2025/gross-domestic-product-3rd-quarter-2025-initial-estimate-and-corporate-profits

Bick, A., A. Blandin, and D. Deming. 2024. “The Rapid Adoption of Generative AI.” NBER Working Paper 32966. https://www.nber.org/system/files/working_papers/w32966/w32966.pdf

Blit, J. 2025. Replace, Reimagine, Recombine: Building an AI Nation to Fix Canada’s Productivity Crisis. https://www.uwaterloo.ca/scholar/sites/ca.scholar/files/jblit/files/blit_ai_3rs.pdf

BNP Paribas. 2025. “The AI Value Gap: Boom or Bust?” https://globalmarkets.cib.bnpparibas/the-ai-value-gap-boom-or-bust/

Boussour, L. 2025. “AI-Powered Growth: GenAI as a New Engine of U.S. Economic Performance.” EY Parthenon. November 17. https://www.ey.com/en_us/insights/ai/ai-powered-growth

Bryan, V., S. Sood, and C. Johnston. 2025. “Analysis on Artificial Intelligence Use by Businesses in Canada, Second Quarter of 2025.” Statistics Canada. https://www150.statcan.gc.ca/n1/pub/11-621-m/11-621-m2025008-eng.htm

————. 2025a. “Analysis on Expected Use of Artificial Intelligence by Businesses in Canada, Third Quarter of 2025.” Statistics Canada. https://www150.statcan.gc.ca/n1/pub/11-621-m/11-621-m2025011-eng.htm

Brynjolfsson, Erik, B. Chandar, and R. Chen. 2025. “Canaries in the Coal Mine? Six Facts about the Recent Employment Effects of Artificial Intelligence.” https://digitaleconomy.stanford.edu/app/uploads/2025/11/CanariesintheCoalMine_Nov25.pdf

Brynjolfsson, E., A. Collis, E. Diewert, F. Eggers, and K. Fox. 2023. “GDP-B: Accounting for the Value of New and Free Goods.” https://datascience.stanford.edu/sites/g/files/sbiybj25376/files/media/file/gdp-b-2023-10.pdf

Brynjolfsson, Erik, Daniel Rock, and Chad Syverson. 2021. “The Productivity J-Curve: How Intangibles Complement General Purpose Technologies.” American Economic Journal: Macroeconomics 13(1): 333–372.

Caamaño-Gordillo, D., J. Mula, and R. de la Torre. 2025. “Impact of Generative Artificial Intelligence on Workload, Efficiency and Labour Productivity.” IFAC-PapersOnLine 59(10). https://www.sciencedirect.com/science/article/pii/S240589632500998X

Calvino, F., and L. Fontanelli. 2023. “A Portrait of AI Adopters across Countries: Firm Characteristics, Assets’ Complementarities and Productivity.” OECD Science, Technology and Industry Working Paper. https://www.oecd.org/content/dam/oecd/en/publications/reports/2023/04/a-portrait-of-ai-adopters-across-countries_76004dec/0fb79bb9-en.pdf

Challapally, A., C. Pease, R. Raskar, and P. Chari. 2025. “The GenAI Divide: State of AI in Business 2025.” MIT. https://mlq.ai/media/quarterly_decks/v0.1_State_of_AI_in_Business_2025_Report.pdf

Chatterji, Aaron, Thomas Cunningham, David Deming, Zoe Hitzig, Christopher Ong, Carl Yan Shan, and Kevin Wadman. 2025. “How People Use ChatGPT.” NBER Working Paper 34255. September. https://www.nber.org/papers/w34255

Corrado, C., J. Haskel, M. Iommi, C. Jona-Lasinio, and F. Bontadini. 2025. “Data Intangible Capital and Productivity.” In Technology, Productivity, and Economic Growth. NBER Studies in Income and Wealth, Volume 83. https://www.nber.org/books-and-chapters/technology-productivity-and-economic-growth/data-intangible-capital-and-productivity

Coupé, Tom, and Weilun Wu. 2025. “The Impact of Generative AI on Productivity: Results of an Early Meta-Analysis.” Working Papers in Economics 25/09. University of Canterbury, Department of Economics and Finance. https://ideas.repec.org/p/cbt/econwp/25-09.html

Diewert, E., K. Fox, and P. Schreyer. 2018. “The Digital Economy, New Products and Consumer Welfare.” ESCoE Discussion Paper. https://ideas.repec.org/p/nsr/escoed/escoe-dp-2018-16.html

Eloundou, Tyna, Sam Manning, Pamela Mishkin, and Daniel Rock. 2023. “GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models.” arXiv.

EY Global. 2025. “Canada Tables Bill with Accelerated Capital Cost Allowance and Other Immediate Expensing Measures.” December 18. https://www.ey.com/en_gl/technical/tax-alerts/canada-tables-bill-with-accelerated-capital-cost-allowance-and-other-immediate-expensing-measures

Filippucci, Francesco et al. 2024. “The Impact of Artificial Intelligence on Productivity, Distribution and Growth: Key Mechanisms, Initial Evidence and Policy Challenges.” OECD Artificial Intelligence Papers No. 15. Paris: OECD.

Filippucci, Francesco, Peter Gal, Katharina Laengle, and Matthias Schief. 2025. “Macroeconomic Productivity Gains from Artificial Intelligence in G7 Economies.” OECD Working Paper. https://www.oecd.org/en/publications/macroeconomic-productivity-gains-from-artificial-intelligence-in-g7-economies_a5319ab5-en.html

Filippucci, F., P. Gal, K. Laengle, M. Schief, and F. Unsal. 2025. “Opportunities and Risks of Artificial Intelligence for Productivity.” International Productivity Monitor 48. https://www.csls.ca/ipm/48/OECD_Final.pdf

First Page Sage. 2025. “ChatGPT Usage Statistics: December 2025.” https://firstpagesage.com/seo-blog/chatgpt-usage-statistics/

Global Center on AI Governance (GCG). 2024. The Global Index on Responsible AI. https://www.global-index.ai/

Goldin, I. et al. 2024. “Why Is Productivity Slowing Down?” Journal of Economic Literature 62(1): 196–268.

Goldman Sachs. 2023. “Generative AI Could Raise Global GDP by 7 Percent.” https://www.goldmansachs.com/intelligence/pages/generative-ai-could-raise-global-gdp-by-7-percent.html

————. 2025. “Why AI Companies May Invest More Than $500 Billion in 2026.” https://www.goldmansachs.com/insights/articles/why-ai-companies-may-invest-more-than-500-billion-in-2026

Greenwood, Jeremy, and Mehmet Yorukoglu. 1997. “1974.” In Carnegie-Rochester Conference Series on Public Policy 46: 49–95.

Gruenwald, P., and S. Panday. 2025. “How Data Centres and AI Are Becoming a New Engine of Growth.” World Economic Forum. December 17. https://www.weforum.org/stories/2025/12/data-centres-and-ai-new-growth-engine/

Harberger, A.C. 1998. “A Vision of the Growth Process.” American Economic Review 88(1): 1–32.

Henseke, G., R. Davies, A. Felstead, D. Gallie, F. Green, and Y. Zhou. 2025. “How Exposed Are UK Jobs to Generative AI? Developing and Applying a Novel Task-Based Index.” https://arxiv.org/pdf/2507.22748

Howitt, P. 2015. Mushrooms and Yeast: The Implications of Technological Progress for Canada’s Economic Growth. Commentary 433. Toronto: C.D. Howe Institute. https://cdhowe.org/publication/mushrooms-and-yeast-implications-technological-progress-canadas-economic/

Iida, K., S. Rahim, C. Hutber, G. Grau, C. Moss, and P. Douglas. 2025. Government AI Readiness Index 2025. Oxford Insights. https://oxfordinsights.com/wp-content/uploads/2026/01/2025-Government-AI-Readiness-Index-Report_01_26.pdf

Jung, C., and B.S. Desikan. 2024. “Transformed by AI: How Generative Intelligence Could Affect Work in the UK and How to Manage It.” Institute for Public Policy Research. https://ippr-org.files.svdcdn.com/production/Downloads/Transformed_by_AI_March24_2024-03-27-121003_kxis.pdf

Lehdonvirta, V., B. Wu, Z. Hawkins, C. Caira, and L. Russo. 2025. “Measuring Domestic Public Cloud Compute Availability for Artificial Intelligence.” OECD Artificial Intelligence Papers. October. https://www.oecd.org/content/dam/oecd/en/publications/reports/2025/10/measuring-domestic-public-cloud-compute-availability-for-artificial-intelligence_39fa6b0e/8602a322-en.pdf

Levin, A. 2025. “The AI Investment Boom Continues to Drive GDP Growth.” Barron’s. https://www.barrons.com/articles/duolingo-stock-price-ai-picks-2026-08cab284

Maslej, Nestor, Loredana Fattorini, Raymond Perrault, Yolanda Gil, Vanessa Parli, Njenga Kariuki, Emily Capstick, Anka Reuel, Erik Brynjolfsson, John Etchemendy, Katrina Ligett, Terah Lyons, James Manyika, Juan Carlos Niebles, Yoav Shoham, Russell Wald, Toby Walsh, Armin Hamrah, Lapo Santarlasci, Julia Betts Lotufo, Alexandra Rome, Andrew Shi, and Sukrut Oak. 2025. The AI Index 2025 Annual Report. Stanford, CA: Institute for Human-Centered AI, Stanford University. April.

McElheran, K. et al. 2023. “AI Adoption in America: Who, What, and Where.” NBER Working Paper 31788.

Narayanan, Arvind, and Sayash Kapoor. 2024. AI Snake Oil: What Artificial Intelligence Can Do, What It Can’t and How to Tell the Difference. Princeton: Princeton University Press. https://press.princeton.edu/books/ebook/9780691249643/ai-snake-oil-pdf

OECD. 2024. “Recommendation of the Council on Artificial Intelligence.” OECD Legal Instruments. https://legalinstruments.oecd.org/en/instruments/OECD-LEGAL-0449

————. 2025. “AI Adoption by Small and Medium-Sized Enterprises.” OECD Discussion Paper for the G7. https://www.oecd.org/content/dam/oecd/en/publications/reports/2025/12/ai-adoption-by-small-and-medium-sized-enterprises_9c48eae6/426399c1-en.pdf

OECD.AI. 2026a. Data from Preqin, last updated January 5, 2026. https://oecd.ai/

————. 2026b. Data from OpenAlex, last updated September 30, 2025. https://oecd.ai/

OECD/BCG/INSEAD. 2025. The Adoption of Artificial Intelligence in Firms: New Evidence for Policymaking. Paris: OECD Publishing. https://doi.org/10.1787/f9ef33c3-en

OECD. 2026. ICT Access and Usage Database. January.

Oschinski, M., and R. Wyonch. 2017. Future Shock? The Impact of Automation on Canada’s Labour Market. Commentary 472. Toronto: C.D. Howe Institute. https://cdhowe.org/wp-content/uploads/2024/12/Update_Commentary2047220web.pdf

Paoli, N. 2025. “Construction Workers Are Earning Up to 30% More and Some Are Nabbing Six-Figure Salaries in the Data Center Boom.” Fortune. December 5. https://fortune.com/2025/12/05/construction-workers-earning-six-figure-salaries-data-center-boom-ai-tech/

Rogerson, A., E. Hankins, P.F. Nettel, and S. Rahim. 2022. Government AI Readiness Index 2022. Oxford: Oxford Insights. https://oxfordinsights.com/wp-content/uploads/2023/11/Government_AI_Readiness_2022_FV.pdf

Schumpeter, J. 1942. Capitalism, Socialism, and Democracy. New York: Harper and Brothers.

Sen, A. 2026. The Missing Pillar of Canada’s AI Strategy: Data Supply Chains. Commentary 706. Toronto: C.D. Howe Institute. https://cdhowe.org/publication/the-missing-pillar-of-canadas-ai-strategy-data-supply-chains/

Singh, Pia. 2026. “AI Spending Wasn’t the Biggest Engine of U.S. Economic Growth in 2025, Despite Popular Assumptions.” CNBC. https://www.cnbc.com/2026/01/26/ai-wasnt-the-biggest-engine-of-us-gdp-growth-in-2025.html

Smith, Brad. 2025. “Microsoft Deepens Its Commitment to Canada with Landmark $19B AI Investment.” Microsoft. https://blogs.microsoft.com/on-the-issues/2025/12/09/microsoft-deepens-its-commitment-to-canada-with-landmark-19b-ai-investment/

Top500. 2025. “November 2025.” https://top500.org/lists/top500/2025/11/

Uren, V., and J.S. Edwards. 2023. “Technology Readiness and the Organizational Journey towards AI Adoption: An Empirical Study.” International Journal of Information Management 68. https://www.sciencedirect.com/science/article/pii/S0268401222001220

White, J., and S. Cesareo. 2024. The Global Artificial Intelligence Index 2025. https://www.tortoisemedia.com/data/global-ai#rankings

World Bank. 2024. “Population Ages 15–64.” https://data.worldbank.org/indicator/SP.POP.1564.TO

Yotzov, Ivan, Jose Maria Barrero, Nicholas Bloom, Philip Bunn, Steven J. Davis, Kevin M. Foster, Aaron Jalca, Brent H. Meyer, Paul Mizen, Michael A. Navarrete, Pawel Smietanka, Gregory Thwaites, and Ben Zhe Wang. 2026. “Firm Data on AI.” NBER Working Paper 34836. https://www.nber.org/papers/w34836
